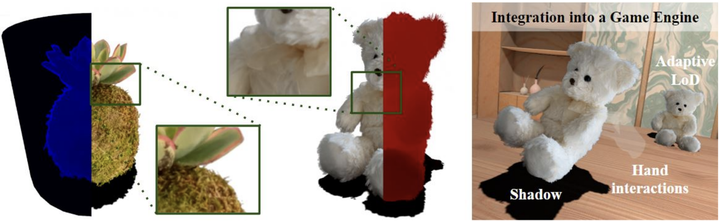

Neural Assets: Volumetric Object Capture and Rendering for Interactive Environments

Abstract

Creating realistic virtual ‘assets’ is a time-consuming process: it usually involves an artist designing the object and then spending considerable effort tweaking its appearance. Intricate details and certain effects, such as subsurface scattering, elude representation using real-time BRDFs, making it impossible to fully capture the appearance of certain objects. Inspired by the recent progress of neural rendering, we propose an approach for capturing real-world objects in everyday environments quickly and faithfully. We use a novel neural representation to reconstruct volumetric effects, such as translucent object parts, and preserve photorealistic object appearance. To support real-time rendering without compromising rendering quality, our model uses a grid of features and a small MLP decoder that is transpiled into efficient shader code that runs at interactive frame rates. This allows the proposed neural assets to be integrated seamlessly with existing mesh environments and objects. Because it relies on standard shader code, rendering is portable across many existing hardware and software systems.
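To illustrate what transpiling a small MLP decoder into shader code could look like, the following is a minimal sketch and not the implementation described in this paper. It assumes a fully connected decoder with ReLU activations on hidden layers and a linear output, and emits a GLSL-style function with the weights baked in as literals. The names `mlp_forward`, `transpile_mlp_to_glsl`, and `decode_features` are hypothetical, and the feature grid lookup that would supply the input vector is omitted.

```python
from typing import List, Sequence, Tuple

# One layer = (weight matrix as rows, bias vector). Hypothetical layout for this sketch.
Layer = Tuple[List[List[float]], List[float]]


def mlp_forward(x: Sequence[float], layers: Sequence[Layer]) -> List[float]:
    """Reference CPU evaluation: ReLU on hidden layers, linear output layer."""
    h = list(x)
    for i, (weights, biases) in enumerate(layers):
        out = [
            sum(w * v for w, v in zip(row, h)) + bias
            for row, bias in zip(weights, biases)
        ]
        if i < len(layers) - 1:
            out = [max(0.0, v) for v in out]
        h = out
    return h


def transpile_mlp_to_glsl(layers: Sequence[Layer], fn_name: str = "decode_features") -> str:
    """Emit a GLSL-style function that evaluates the same MLP with baked-in weights."""
    in_dim = len(layers[0][0][0])
    out_dim = len(layers[-1][0])
    lines = [f"void {fn_name}(in float x[{in_dim}], out float y[{out_dim}]) {{"]
    prev = "x"
    for i, (weights, biases) in enumerate(layers):
        last = i == len(layers) - 1
        name = "y" if last else f"h{i}"
        if not last:
            # Hidden activations live in a local array.
            lines.append(f"    float {name}[{len(weights)}];")
        for r, (row, bias) in enumerate(zip(weights, biases)):
            terms = " + ".join(
                f"{float(w)!r} * {prev}[{c}]" for c, w in enumerate(row)
            )
            expr = f"{terms} + {float(bias)!r}"
            if not last:
                expr = f"max(0.0, {expr})"  # ReLU
            lines.append(f"    {name}[{r}] = {expr};")
        prev = name
    lines.append("}")
    return "\n".join(lines)


# Toy decoder (made-up weights): 2 input features -> 2 hidden units -> 1 output.
example_layers: List[Layer] = [
    ([[1.0, -1.0], [0.5, 0.5]], [0.0, -1.0]),
    ([[1.0, 2.0]], [0.25]),
]

if __name__ == "__main__":
    print(transpile_mlp_to_glsl(example_layers))
```

Baking the weights into the generated source as constants, rather than fetching them from a buffer at run time, is one plausible design choice for a very small decoder; whether the method in this paper does so is not stated in the abstract.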